Join the Menttor community

Track progress and collaborate on roadmaps with students worldwide.

The Surface Code

Dennis, E., Kitaev, A., Landahl, A., & Preskill, J. (2002). Topological quantum memory. Journal of Mathematical Physics, 43(9), 4452-4505.

Read Original Paper

The transition from the theoretical elegance of the toric code to the practical reality of the surface code addressed a fundamental constraint in quantum hardware engineering. While the original toric code required periodic boundary conditions—effectively demanding that a lattice of qubits be physically wrapped around a torus—the researchers at Caltech and Microsoft proposed a planar alternative that could exist on a flat two-dimensional sheet. This shift was necessary to align quantum error correction with the planar fabrication processes of modern superconducting and ion-trap architectures. It proved that the topological protection afforded by a torus could be preserved on a finite plane by carefully managing its boundaries.

Planar Boundaries and Logical Encoding

Rough and Smooth Edges

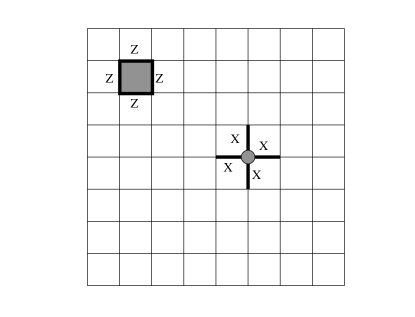

The mechanism of the surface code relies on the introduction of two distinct types of boundaries: rough (or plaquette) edges and smooth (or site) edges. On a square lattice where qubits reside on the edges, a rough edge is one where the stabilizer operators are truncated to three-qubit plaquettes, while a smooth edge truncates the vertex operators. This asymmetry is what allows for the encoding of a logical qubit on a simply connected surface. A logical operation is defined as a chain of errors that stretches from one rough edge to the opposite rough edge, while a logical operation is a chain of errors connecting the two smooth edges.

Syndrome Measurement and Local Constraints

Syndrome measurement in the surface code is performed by measuring local stabilizer operators—the vertex and plaquette operators—each involving only four neighboring qubits. This locality is the primary figure of merit for the architecture, as it ensures that the complexity of the error correction does not scale with the size of the system. When a physical error occurs, it creates a pair of "defects" or monopoles at the ends of an error chain. Because the stabilizers commute, these defects can be tracked over time to form a history of the system's evolution. The recovery process then involves a classical matching algorithm that identifies the most probable error chains to "annihilate" these defects, effectively returning the system to its protected ground state.

The Shift to Local Error Correction

Thresholds and Hardware Feasibility

The global abstraction enabled by this work was the realization that a fault-tolerant accuracy threshold could be achieved using strictly local interactions. Before the surface code, it was widely assumed that error correction required complex, non-local wiring to concatenate different levels of encoding. By proving that a 2D lattice with only nearest-neighbor gates could reach a threshold of nearly 1%, the researchers provided a viable path for large-scale quantum hardware. This moved the field away from the "heroic" effort of building a perfect qubit toward the "engineering" challenge of building a sufficiently large array of mediocre qubits.

Topological Memory as a Physical Reality

This shift transformed the concept of a quantum memory from a mathematical ideal into a physical reality. It suggested that the most effective way to build a stable quantum system is not to eliminate noise at the source, but to create a medium where information is stored in the non-local topology of the state. The surface code remains the foundational blueprint for the majority of the world's leading quantum hardware efforts, though the challenge of performing logical gates between distant patches of code remains an open area of research. Whether the overhead of the surface code—requiring thousands of physical qubits for a single logical qubit—can be reduced through more efficient topological constructions is a central question for the next decade of development.

Dive Deeper

Topological Quantum Memory (arXiv)

arXiv • article

Explore ResourceSurface Codes: Towards Practical Error Correction

arXiv • article

Explore Resource