Join the Menttor community

Access accelerated AI inference, track progress, and collaborate on roadmaps with students worldwide.

ACT: Action Chunking Transformer

Zhao, T. Z., Kumar, V., Levine, S., & Finn, C. (2023). Learning fine-grained bimanual manipulation with low-cost hardware. arXiv preprint arXiv:2304.13705.

Read Original Paper

The 2023 'Action Chunking with Transformers' (ACT) paper addressed the difficulty of learning complex, fine-grained robotic tasks from a small number of human demonstrations. While traditional imitation learning often suffers from 'compounding errors'—where a small mistake in one step leads to total failure—researchers at Stanford and Meta proposed a method that predicts entire 'chunks' of future actions simultaneously. It was a shift from step-by-step prediction to sequence-level planning, allowing robots to perform delicate tasks like opening a marker or using a slotted spoon with high reliability.

The Horizon Reduction Shift

The ACT architecture: using a Transformer CVAE to predict action chunks for robotic control.

The primary technical shift in ACT was the move from single-step action prediction to 'action chunking,' where the model predicts a sequence of $k$ future joint positions (typically $k=100$) simultaneously. In standard imitation learning, a robot makes independent decisions at every timestep, which leads to 'compounding errors'—small mistakes that drift the robot away from the expert's path until it fails. By predicting and executing chunks, the robot reduces the 'effective horizon' of the task by a factor of $k$. Instead of making 1,000 risky decisions over a few seconds, it makes only 10. This approach proved that temporal consistency is as important as spatial accuracy in high-precision tasks like opening a marker or slotting a battery. It revealed that the most robust robotic policies are those that plan at the level of full motions rather than reactive steps.

The Transformer CVAE Architecture

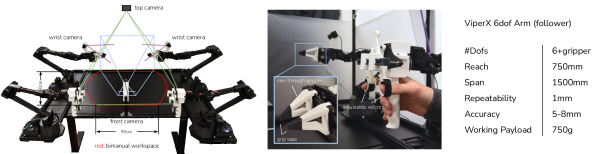

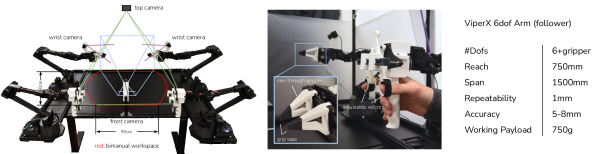

To handle the multi-modality of human demonstrations—where different people might perform the same task with different speeds or styles—ACT employs a Conditional Variational Autoencoder (CVAE) with a Transformer backbone. During training, an encoder processes the entire sequence of expert actions to produce a 'style' latent variable, which captures the nuances of that specific demonstration. The policy itself is a Transformer decoder that combines this latent variable with visual features from four camera views and current joint positions. By treating action generation as a sequence modeling problem, the model can capture the non-Markovian nature of human motion, such as intentional pauses or subtle adjustments. It revealed that high-fidelity imitation requires a model capable of understanding the 'intent' behind a sequence rather than just the immediate state of the environment.

Temporal Ensembling for Smoothness

A critical technical challenge in executing action chunks is the 'jerky' movement that occurs at the boundaries between one chunk and the next. The researchers solved this through 'temporal ensembling,' where the policy is queried at every single timestep, producing multiple overlapping predictions for the current and future positions. These predictions are then combined using an exponential weighted average, giving more weight to the most recent (and thus most informed) predictions. This process ensures a continuous, smooth trajectory while allowing the robot to remain reactive to environmental changes. It proved that the 'pauses' seen in earlier robotic systems were not a hardware limitation, but a software failure to manage the transition between planned sequences of motion.

The ALOHA System and 50Hz Data

The reasoning behind ACT was to prove that sophisticated manipulation does not require million-dollar hardware. The researchers developed ALOHA, a bimanual teleoperation setup built from low-cost, off-the-shelf components. A key finding was the importance of data frequency; they demonstrated that collecting and processing data at 50Hz is essential for fine-grained manipulation, as lower frequencies (like 5Hz) resulted in a 62% slowdown in human task completion. By training on just 50 human demonstrations per task, the ACT algorithm achieved high success rates on tasks previously thought to require thousands of examples. This revealed that the bottleneck in robotics is often the quality and temporal resolution of the training data rather than the raw precision of the motors.

The Generalization Challenge

The success of ACT highlights a 'generalization gap' where a robot can master a task in a specific environment but fails when the lighting or the position of objects changes slightly. This raises a fundamental question: how many demonstrations are needed for a robot to truly 'understand' the physics of a task rather than just mimicking a specific sequence of movements? It remains to be seen if the principles of action chunking can be combined with large-scale pre-training—similar to how language models are built—to create robots that are as adaptable as they are precise. It suggests that the path to general-purpose robots requires a move away from isolated task learning toward a more holistic foundation of physical reasoning.

Dive Deeper

ACT Project Page

Stanford • docs

Explore ResourceACT on GitHub

GitHub • code

Explore ResourceACT Paper on arXiv

arXiv • article

Explore Resource