Join the Menttor community

Access accelerated AI inference, track progress, and collaborate on roadmaps with students worldwide.

GraphCast: Global Weather AI

Lam, R., Sanchez-Gonzalez, A., Willson, C., et al. (2023). Learning skillful medium-range global weather forecasting. Science, 382(6677), ado3910.

Read Original Paper

The 2023 'GraphCast' paper from Google DeepMind introduced a radical shift in meteorology by replacing the explicit physical equations of traditional weather models with a data-driven graph neural network. For decades, global weather forecasting relied on Numerical Weather Prediction (NWP), which uses massive supercomputers to solve fluid dynamics equations over a grid of billions of points. This process is computationally expensive and slow, often taking hours to produce a single 10-day forecast. GraphCast demonstrated that by treating the atmosphere as a global message-passing graph, a machine can learn to predict the future of the weather directly from historical data. It proved that the complexity of the Earth's climate can be captured more efficiently through learned representations than through manually defined physical formulas.

The Message-Passing Shift

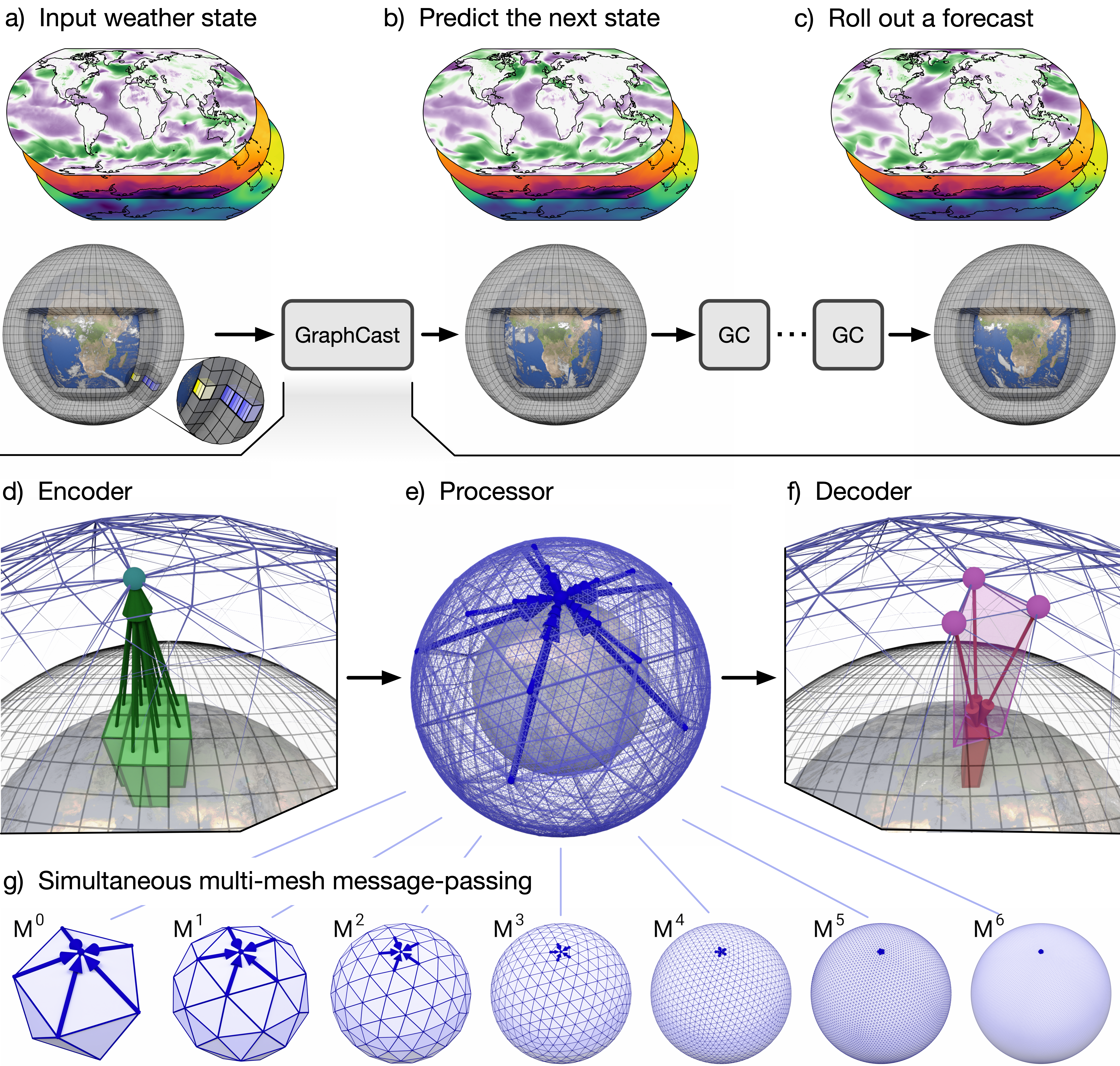

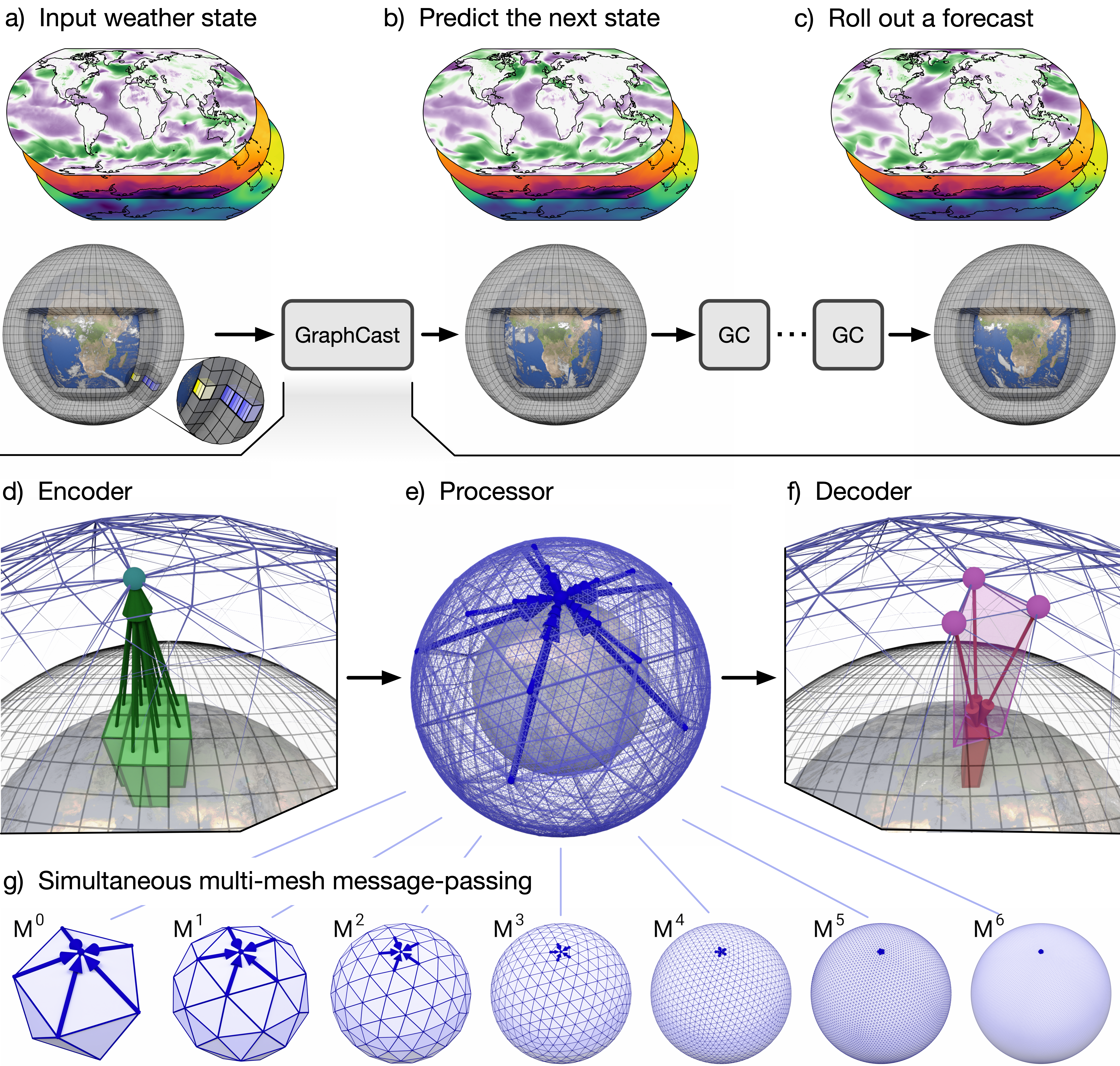

The GraphCast architecture: using an encoder-processor-decoder GNN for global forecasting.

The primary technical shift in GraphCast was the move from solving partial differential equations to a 'message-passing' objective on a global scale. Traditional models calculate the state of the atmosphere by simulating the local physical interactions between adjacent grid cells. In contrast, GraphCast uses a Graph Neural Network (GNN) to propagate information across a multi-mesh representation of the Earth. This architecture allows the model to capture both local weather patterns and long-range teleconnections—where a change in the Pacific Ocean affects the weather in Europe—in a single, unified pass. This approach revealed that the most effective way to model the atmosphere is not as a series of isolated grid points, but as a deeply interconnected network of influences where information flows dynamically across the globe.

Multi-Mesh and Spatial Homogeneity

How GraphCast achieves its high resolution of 0.25 degrees lies in its use of a 'multi-mesh' graph derived from a refined icosahedron. Standard latitude-longitude grids suffer from a 'pole problem' where grid points cluster together at the top and bottom of the world, creating mathematical instabilities and computational waste. By using a multi-mesh, GraphCast ensures that its nodes are distributed almost uniformly across the Earth's surface. This spatially homogeneous representation allows the GNN to process the entire globe with consistent resolution and efficiency. It proved that the 'shape' of our data representations is as important as the algorithms we run on them, and that breaking free from standard coordinate systems is essential for modeling spherical environments like the Earth.

Efficiency and Real-Time Forecasting

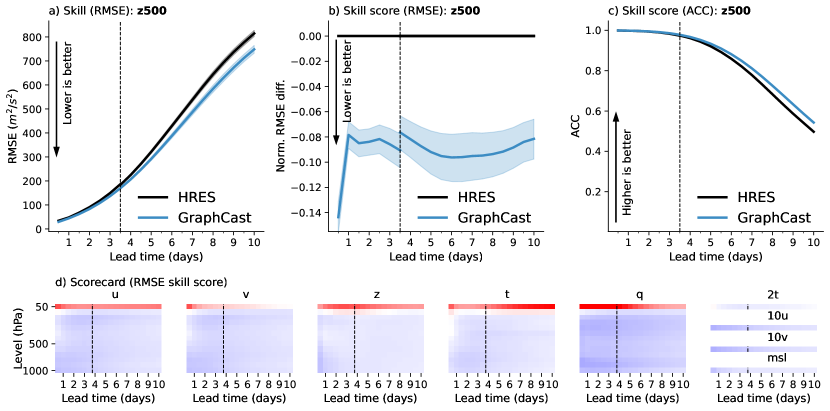

GraphCast performance scorecard: outperforming traditional NWP on 90% of global variables.

The most immediate impact of GraphCast was its massive leap in computational efficiency, producing a 10-day global forecast in less than a minute on a single TPU. Traditional supercomputing clusters require thousands of cores and over an hour to perform the same task. This finding revealed that the bottleneck in weather prediction was not a lack of physical understanding, but the inefficiency of the classical simulation paradigm. By learning from four decades of historical weather data, GraphCast can 'shortcut' the complex calculations of NWP, providing accurate forecasts with a fraction of the energy and time. This shift toward 'inference-only' forecasting suggests that the future of environmental monitoring will be dominated by models that prioritize rapid response and data-driven adaptation.

Weather as a Global Graph

The success of GraphCast suggests that many complex, high-dimensional physical systems can be simplified through the lens of graph-based learning. By outperforming the industry-standard HRES model on over 90% of verification targets, it proved that machine learning is no longer a secondary tool in meteorology, but a primary driver of accuracy. This reveals a fundamental insight: the 'physics' of the world are encoded within the data itself, and a sufficiently deep model can recover these rules without explicit instruction. It raises the question of whether other planetary-scale challenges—such as ocean modeling or climate change projections—can also be solved by treating the Earth as a giant, learnable graph. It suggested that the path to understanding our planet lies in bridging the gap between physical law and statistical pattern.

Dive Deeper

GraphCast Paper in Science

Science • article

Explore ResourceDeepMind GraphCast Blog

DeepMind • article

Explore ResourceGraphCast Implementation on GitHub

GitHub • code

Explore Resource