DDPM: Diffusion Models

Ho, J., Jain, A., & Abbeel, P. (2020). Denoising diffusion probabilistic models. Advances in neural information processing systems, 33, 6840-6851.

The 2020 paper on Denoising Diffusion Probabilistic Models (DDPM) showed that high-quality images could be generated by reversing a process of gradual destruction. For years, generative models like GANs had dominated image synthesis, but they were often unstable to train and difficult to scale. Jonathan Ho, Ajay Jain, and Pieter Abbeel proposed that instead of pitting two networks against each other, a single model could learn to reconstruct an image by systematically removing noise. It was a shift toward viewing generation as a steady, iterative refinement of random signals.

The Forward and Reverse Process

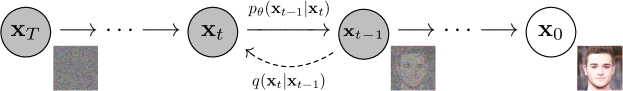

The directed graphical model showing the step-by-step diffusion process.

The technical shift was the definition of a 'forward' and a 'reverse' diffusion process. In the forward process, a small amount of Gaussian noise is added to an image at each step until it is nearly indistinguishable from pure noise. The model is then trained on the reverse task: given a noisy image, predict how to remove that noise and move back toward the original. As the authors noted, 'A diffusion probabilistic model is a parameterized Markov chain trained using variational inference to produce samples matching the data after finite time.' By breaking generation into many small steps (the paper uses T = 1000), each reverse step only has to undo a small amount of noise, which makes training far more stable than the adversarial setup used by GANs.
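The forward process can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code: it assumes the linear noise schedule reported in the paper (betas from 1e-4 to 0.02 over 1000 steps) and uses the closed-form expression for jumping from a clean image directly to step t. The function names and the random stand-in "image" are hypothetical.

```python
import numpy as np

def linear_beta_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances; the defaults follow the schedule reported in the paper."""
    return np.linspace(beta_start, beta_end, num_steps)

def forward_diffuse(x0, t, alpha_bar, rng):
    """Sample x_t from q(x_t | x_0) in one shot using the closed form.

    x_t = sqrt(alpha_bar[t]) * x0 + sqrt(1 - alpha_bar[t]) * noise
    """
    noise = rng.standard_normal(x0.shape)
    x_t = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * noise
    return x_t, noise

betas = linear_beta_schedule()
# alpha_bar[t] is the fraction of the original signal variance left at step t.
alpha_bar = np.cumprod(1.0 - betas)

rng = np.random.default_rng(0)
# Hypothetical stand-in for an image scaled to [-1, 1].
x0 = rng.uniform(-1.0, 1.0, size=(3, 8, 8))

x_early, _ = forward_diffuse(x0, 10, alpha_bar, rng)   # still close to x0
x_late, _ = forward_diffuse(x0, 999, alpha_bar, rng)   # almost pure noise
```

Because `alpha_bar` shrinks toward zero as t grows, `x_early` stays strongly correlated with the original while `x_late` carries almost no trace of it.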

The Denoising Objective

The reasoning behind DDPM was that predicting the noise present in a corrupted image is a much easier learning problem than generating a complex image in a single pass. The researchers found that by optimizing a simplified 'denoising' objective, in which the network predicts the noise that was added and is penalized by the squared error, the model could produce high-quality samples that rivaled those from GANs. This showed that complex distributions could be modeled through a sequence of simple, local decisions. It suggests that generation is essentially the act of finding order within chaos, one step at a time.
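The simplified objective amounts to a mean squared error between the noise that was added and the noise the network predicts. The sketch below is only an illustration under stated assumptions: `predict_noise` stands in for the neural network (the paper uses a U-Net), `zero_predictor` is a hypothetical placeholder that always guesses zero, and the batch of random arrays stands in for real images.

```python
import numpy as np

def denoising_loss(predict_noise, x0_batch, alpha_bar, rng):
    """Monte Carlo estimate of the simplified loss E[ ||noise - predicted||^2 ]."""
    batch_size = x0_batch.shape[0]
    # Draw one random timestep per example.
    t = rng.integers(0, len(alpha_bar), size=batch_size)
    noise = rng.standard_normal(x0_batch.shape)
    # Reshape so alpha_bar[t] broadcasts over the non-batch dimensions.
    ab = alpha_bar[t].reshape(batch_size, *([1] * (x0_batch.ndim - 1)))
    x_t = np.sqrt(ab) * x0_batch + np.sqrt(1.0 - ab) * noise
    predicted = predict_noise(x_t, t)
    return np.mean((noise - predicted) ** 2)

def zero_predictor(x_t, t):
    """Hypothetical placeholder for the network: always predicts zero noise."""
    return np.zeros_like(x_t)

# Linear schedule as reported in the paper.
alpha_bar = np.cumprod(1.0 - np.linspace(1e-4, 0.02, 1000))
rng = np.random.default_rng(0)
x0_batch = rng.uniform(-1.0, 1.0, size=(16, 3, 8, 8))

loss = denoising_loss(zero_predictor, x0_batch, alpha_bar, rng)
```

A predictor that always outputs zero scores a loss close to 1, the variance of the unit Gaussian noise, so a trained network is useful to the extent that it pushes the loss below that baseline.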

The Speed Bottleneck

While DDPM produced excellent results, the iterative nature of the reverse process made sampling very slow: every denoising step requires a full pass through the network, and the paper's setup uses 1000 such steps to generate a single image. This highlighted a new trade-off in generative AI: while diffusion models are easier to train and more stable than GANs, they are much more computationally expensive at inference time. It raises the question of whether the next leap in generation will involve finding ways to skip these steps without losing the clarity of the result.
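The cost is easiest to see in the sampling loop itself. The sketch below follows the ancestral sampling update described in the paper, with the per-step variance set to beta_t, but it is an illustration rather than a working generator: `zero_predictor` is a hypothetical placeholder for the trained network, so the output is not a meaningful image. The point is the call count.

```python
import numpy as np

def sample(predict_noise, shape, betas, rng):
    """Run the reverse chain from pure noise, counting network evaluations."""
    alphas = 1.0 - betas
    alpha_bar = np.cumprod(alphas)
    x = rng.standard_normal(shape)  # start from Gaussian noise
    num_calls = 0
    for t in reversed(range(len(betas))):
        eps = predict_noise(x, t)   # one full network pass per step
        num_calls += 1
        # Mean of the reverse step: subtract the scaled predicted noise, then rescale.
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bar[t]) * eps) / np.sqrt(alphas[t])
        # Fresh noise is added at every step except the last one.
        z = rng.standard_normal(shape) if t > 0 else np.zeros(shape)
        x = mean + np.sqrt(betas[t]) * z
    return x, num_calls

def zero_predictor(x, t):
    """Hypothetical placeholder for the trained network."""
    return np.zeros_like(x)

betas = np.linspace(1e-4, 0.02, 1000)  # schedule as reported in the paper
rng = np.random.default_rng(0)
image, num_calls = sample(zero_predictor, (3, 8, 8), betas, rng)
```

Here `num_calls` equals the number of timesteps, so generating one sample costs as many network evaluations as there are steps in the chain, whereas a GAN generator produces its output in a single pass.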

Dive Deeper

Diffusion Models Paper

arXiv • article

Lilian Weng: Diffusion

Lilian Weng • article