Join the Menttor community

Access accelerated AI inference, track progress, and collaborate on roadmaps with students worldwide.

GAN: Generative Adversarial Nets

Goodfellow, I., Pouget-Abadie, J., Mirza, M., Xu, B., Warde-Farley, D., Ozair, S., ... & Bengio, Y. (2014). Generative adversarial nets. Advances in neural information processing systems, 27.

Read Original Paper

The 2014 proposal of Generative Adversarial Networks (GANs) by Ian Goodfellow and his colleagues introduced a paradigm shift in generative modeling by framing the problem as a structural competition. Before this, generating realistic data like images required complex probabilistic estimations or heavy approximations to capture the underlying distribution of the data. Goodfellow argued that instead of explicitly defining what 'good' data looks like through mathematical formulas, a model could learn to generate it by attempting to fool a second, competing model. This shifted the focus from statistical estimation to a zero-sum game between two neural networks, suggesting that complexity in artificial systems can emerge from the tension of opposing objectives.

The Adversarial Framework

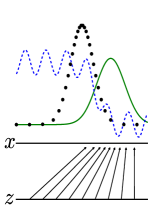

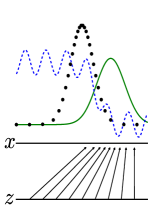

Visualization of the adversarial training process where the generator distribution aligns with the data distribution.

The framework operates as a game between a Generator and a Discriminator, each with a distinct and conflicting goal. The Generator is tasked with creating synthetic samples that are indistinguishable from the training data, while the Discriminator acts as a detective, attempting to correctly identify which samples are real and which are fakes produced by its opponent. As the researchers famously noted, the relationship is analogous to a team of counterfeiters attempting to produce fake currency and a police force trying to detect it. This competition drives a continuous improvement loop: as the Discriminator becomes more adept at spotting fakes, the Generator is forced to produce increasingly realistic outputs to survive. This revealed that the most effective way to teach a machine to create is to give it an opponent that can recognize its failures.

Learning through Error

The primary technical shift was the use of backpropagation to train both networks simultaneously within a unified minimax objective function. Because the Discriminator is itself a neural network, it provides a differentiable signal—a gradient—that tells the Generator exactly how to adjust its internal parameters to make its outputs more realistic. This eliminated the need for the Markov chains or other complex sampling methods that had limited earlier generative models, allowing the system to scale to high-dimensional data like photographs. The system learns to map a simple source of random noise into a complex, high-fidelity data distribution, proving that the appearance of order can be generated from chaos through a series of iterative, error-driven adjustments.

Instability and Equilibrium

Despite their power, GANs revealed a fundamental challenge in maintaining the fragile equilibrium between the two competing networks. If the Discriminator becomes too proficient too quickly, the Generator receives a 'vanishing' gradient and cannot learn; conversely, if the Generator finds a specific output that the Discriminator consistently fails to identify—a phenomenon known as mode collapse—the system stops exploring the full diversity of the data. This instability suggests that optimizing a complex system is not just about moving toward a single goal, but about managing the balance between competing forces. It raises the question of whether true intelligence in artificial systems is better achieved through direct, top-down optimization or through the emergent, often unpredictable properties of adversarial interaction.

Dive Deeper

GANs Explained

Machine Learning Mastery • article

Explore ResourceIan Goodfellow Interview

MIT Tech Review • article

Explore Resource